Pros:

Pros:- No need to worry about DRBD failover and recovery times

- No need to worry about potential data loss when failover from active master to standby master (has all binlog really been replicated over from the failed master?)

- Writes go to any mysql server connected to the Cluster - thus writes are easy to scale

The data nodes will then send the committed writes to every mysql server with--log-binenabled. - MySQL Cluster can handle a lot of writes (but the slaves can become the bottleneck)

- Data nodes can be added online (MySQL Cluster 7.0) - thus write scaling is easy and can be done on-demand

- Add new, consistent, slaves online (thanks to the online backup in MySQL Cluster)

- Simple and very fast master failover policy - all masters connected to the cluster will receive the same events, thus (when both are working) they will have the same content in the binary log - none is lagging behind.

- No additional hardware compared to a normal HA setup of the masters (two computers are enough)

Setting up MySQL Cluster

You need to have at least:

- 2 computers (HA with dual switches, nics and bonding, split brain only if both links are broken)

- dual switches, dual nics (bonded).

- each computer runs:

1 data node (ndbd/ndbmtd),

1 mysql server (master),

1 ndb_mgmd

or - 4 computers (better HA and no risk for network partitioning/split brain)

- dual switches, dual nics (bonded).

- two computers each runs:

1 data node (ndbd/ndbmtd),

- two computers each runs:

1 mysql server (master), 1 ndb_mgmd.

With www.severalnines.com/config you can easily generate either of these setups, and have a running Cluster in a couple of minutes.

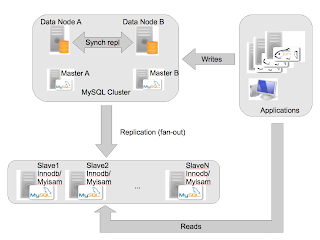

The idea here (just as in a normal HA MySQL setup) is to have two masters,

master_a and master_b. The slaves will replicate from master_a and failover to master_b if master_a fails!Note that you can write to _all_ mysql servers, and the data nodes will send the changes to the mysql servers that has --log-bin enabled.

Preparation of Slaves

You must chose between 1) and 2) below, else the replication won't work:

1. Enable INDEMPOTENCY in my.cnf of the slaves using slave-exec-mode:

--slave-exec-mode=IDEMPOTENT

--ndb-log-update-as-write=OEach slave must have the table

mysql.ndb_apply_status:mysql_slave> use mysql;

mysql_slave> CREATE TABLE `ndb_apply_status` (

`server_id` int(10) unsigned NOT NULL,

`epoch` bigint(20) unsigned NOT NULL,

`log_name` varchar(255) CHARACTER SET latin1 COLLATE latin1_bin NOT NULL,

`start_pos` bigint(20) unsigned NOT NULL,

`end_pos` bigint(20) unsigned NOT NULL,

PRIMARY KEY (`server_id`) USING HASH)

ENGINE=INNODB DEFAULT CHARSET=latin1;

This table is used to know what data has been applied on the slave and from which master (see below more a longer explanation).

Preparation of Masters (masterA, masterB)

Ensure you have the following in the my.cnf of the master mysql servers::

[mysqld]

...

#if you didntset --slave-exec-mode=IDEMPOTENT on the slaves you must set:ndb-log-update-as-write=O

#elsendb-log-update-as-write=1

#endif -- so pick one of the above!!#enable cluster storage engine:

ndbcluster

#connect to cluster:ndb-connectstring=<hostname of ndb_mgmd:port>

#sync after each epoch (10 ms of transactions) - here is a disk tuning possibility.

sync-binlog=1

#you may also want to set binlog_cache_size (if Binlog_cache_disk_use is growing):

binlog_cache_size=1048576

GRANT the replication user, e.g,Creating the tablesmysql>GRANT REPLICATION SLAVE ON *.* TO repl@'%' IDENTIFIED BY 'password'

On MySQL Cluster

Create all the tables for the application(s) using

engine=NDBCLUSTER.On each slave

Create all the tables for the application(s) using e.g

engine=MYISAM or engine=INNODB.Staging a slave - with traffic on Cluster:

In order to get a consistent slave you have to do as follows:

Backup the Cluster

Using the management client:

ndb_mgm>start backup

If you use the Configurator scripts:

./start-backup.sh /path/mycentral_backupdir/

/path/mycentral_backupdir/Remember the

stopGCP=<some number> that is printed at the end of the backup.If you use

ndb_mgm -e "start backup" then it will look like this:ndb_mgm -e "start backup"If you use

Connected to Management Server at: localhost:1186

Waiting for completed, this may take several minutes

Node 3: Backup 14 started from node 1

Node 3: Backup 14 started from node 1 completed

StartGCP: 98278 StopGCP: 98281

#Records: 2058 #LogRecords: 0

Data: 51628 bytes Log: 0 bytes

./start-backup /tmp then it will look like this:Backup has completed on data nodes.This is important as this tells where the backup ended, and from what point the slave should get the changes. This number will be used later when the replication is setup.

Copying backup files to /tmp//BACKUP-2009May09-150743

BACKUP-15.3.log 100% 52 0.1KB/s 00:00

BACKUP-15-0.3.Data 100% 26KB 26.1KB/s 00:00

BACKUP-15.3.ctl 100% 11KB 10.6KB/s 00:00

BACKUP-15.4.log 100% 52 0.1KB/s 00:00

BACKUP-15-0.4.Data 100% 26KB 25.7KB/s 00:00

BACKUP-15.4.ctl 100% 11KB 10.6KB/s 00:00

Backup completed and backup files can be found in /tmp//BACKUP-2009May09-150743

Use the StopGCP to setup Cluster to non-cluster replication:

StopGCP: 98482

Convert Cluster backup data files to CSV files

For each backup file (for each data node in the master cluster) run:

or if you are using the Configurator scripts:ndb_restore -c <connectstring>

-b <backupid>

-n <nodeid>

--print-data

--print-log

--append

--tab=<output directory>

--fields-enclosed-by="'"

--fields-separated-by=","

--lines-terminated-by="\n"

This will create one txt file for each table and put it in./restore-backup.sh --csv --backupdir=/path/mycentral_backupdir/ --csvdir=/mycsv/

--csvdir (Configurator) or --tab=<outputdir> for the vanilla ndb_restore.It will also tell you (if you used start-backup.sh) the stop gcp:

Data is appended to the txt files, so running the command more than once is bad. Better delete the old txt files first../restore-backup.sh --csv

--backupdir=/cluster/backups/BACKUP-2009May10-165004

--csvdir=/cluster/backups/csv

...

To setup replication use StopGCP: 1511

Loading the .txt (CSV) into Slave

For all tables:

mysql_slave> LOAD DATA INFILE 'tablename.TXT'

INTO table <tablename>

FIELDS TERMINATED BY ','ENCLOSED BY '\''

LINES TERMINATED BY '\n';

Staging a slave - no traffic on Cluster:

You can use mysqldump for this.

host> mysqldump -u<user> -p<password> <database> > database_dump.sql

engine=ndb to engine=innodbhost> sed -i -e 's#ndbcluster#innodb# database_dump.sqlmaster_a:master_a> show master status;Starting the replication

Create and grant the replication user (not shown here)

If you have staged the slave using

mysqldump, then you go directly to CHANGE MASTER.. using the BINLOG and POS from the previous step.Find out where the slave should start replicating from using the

stopGCP from the previous step "Backup the Cluster".if the query returns nothing (this means that no changes has happened on the cluster after the backup was completed) run:mysql_masterA> SELECT @file:=SUBSTRING_INDEX(File, '/',-1),

@pos:=Position

FROM mysql.ndb_binlog_index

WHERE gci>@stopGCP ORDER BY gci ASC LIMIT 1;

mysql_masterA> SHOW MASTER STATUS;mysql_slave> CHANGE MASTER TO MASTER_HOST='masterA',

MASTER_PORT=3306,

MASTER_USER='repl',

MASTER_PASSWORD='password',

MASTER_LOG_FILE='@file',

MASTER_LOG_POS=@pos;

mysql_slave> START SLAVE IO_THREAD;

## verify slave connected to masterA:

mysql_slave> SHOW SLAVE STATUS\g Master Failovermysql_slave> START SLAVE SQL_THREAD;

mysql_slave> SHOW SLAVE STATUS\g;

Failover from masterA to masterB

If the query returns nothing (meaning nothing has been written to the master since the failure of masterA) do:

mysql_slave> STOP SLAVE;

mysql_slave> SELECT @epoch:=max(epoch) FROM mysql.ndb_apply_status;

mysql_masterB> SELECT @file:=SUBSTRING_INDEX(File, '/', -1),

@pos:=Position

FROM mysql.ndb_binlog_index

WHERE epoch>@epoch ORDER BY epoch ASC LIMIT 1;

mysql_masterB> SHOW MASTER STATUS;

#use the position and file in the query below:

Master crashes

mysql_slave> CHANGE MASTER TO MASTER_HOST='masterA',

MASTER_PORT=3306,

MASTER_USER='repl',

MASTER_PASSWORD='password',

MASTER_LOG_FILE='@file',

MASTER_LOG_POS=@pos;

mysql_slave> START SLAVE:

When the master restarts after a crash, it will write a

LOST_EVENTS event into its binary log.If a

LOST_EVENTS event is received on a slave, the slave will stop, and you have to do a Master Failover.Master connection glitch between data node and a master mysql server

If the master would lose contact for a very short period of time with the cluster, then a

LOST_EVENTS event will be written into the binary log. See above.ndb_apply_status, epochs and ndb_binlog_index

The

mysql.ndb_apply_status is very important as it stores what epochs from the Cluster that the slave has applied.The epochs are consistent units and stores 10ms (controlled by

TimeBetweenEpochs in config.ini) of committed transactions.On the master side, the epochs are mapped to binary log filename and position in the

mysql.ndb_binlog_index table.The

mysql.ndb_binlog_index table is a MYISAM table is local to each master and is the glue between what epoch has been applied on the slave, and what binary log and position it corresponds to for the master.Monitoring

Replication - you can use Enterprise Monitor or whatever monitoring tool you prefer to monitor the replication and the mysql servers. Scripts for automatic failover must be implemented.

Cluster - you can use cmon to monitor the data nodes and the other components connected to the Cluster. The web interface will soon look better :)

Possible Extensions

You can of course give the master mysql servers one virtual IP and use Hearbeat to failover the IP. This simplifies for the applications.

Bugs and Issues - TIMESTAMP

Be careful with TIMESTAMP. Currently the ndb_restore program does not generate the timestamp using the MySQL TIMESTAMP, but rather it is a UNIX_TIMESTAMP.

I have written a bug report on this. In the meantime, you have to convert the TIMESTAMP columns in the .txt files to the MySQL TIMESTAMP format YYYY-MM-DD HH:MM:SS.

Please note that this is not a problem if you use

mysqldumpI will update this part if you find any other problems - just let me know.

Thanks to Magnus Blåudd, Cluster Team, for your input on this post!

30 comments:

Johann,

Very good post. I have a question though, is it still true that queries that run well on a "standard" mysql server can sometimes run very slowly on a cluster setup (and vice versa)?

I have only tested cluster through the version included with 5.1 so I am a bit behind. I know a lot of improvements have been made since cluster was split off, but is it to the point where its a seamless transition?

Hope this makes sense!!

Keith

Hi Keith,

The point in this setup is that you write to the Cluster, and read from the Slaves.

The slaves are innodb/myisam which do the complex queries. The Cluster only handles the writes in this case. So this setup brings the best in two worlds.

Queries that run slow on MySQL Cluster, are generally multi-table joins, which generates many network roundtrips between the mysql server and the data nodes. This is mainly an optimizer problem. Network is expensive, but the optimizer in mysql server does not care.

BR

johan

Thanks! That explains it perfectly.

Keith

and if you want to follow the progress of making joiins work better (coming up in next 2 versions, perhaps) then you should read jonasoreland.blogspot.com

Added a comment at the first section of the post:

Worried that NDB stores data in RAM - well use disk based tables!

Johan: Seems like LOST_EVENTS is not really documented anywhere. As a feature request, maybe your next post could describe it and how/why to use it.

henrik

Excellent article, thank you. Just wondering if you could point me to a link on how the virtual IP might work and also a link on what the network topology might look like - thanks.orr

"Johan Andersson’s Cluster and HA Blog offers a thorough HOWTO on write scaling using Cluster to non-cluster replication. [...]"

Log Buffer #147

Hingo - yes, perhaps that would be a good idea to illustrate that. But I filed a bug report.

Paul,

Not in the short time as I don't have the time to do it :(

Here are some outlines how to do it:

http://www.ultramonkey.org/

http://www.developertutorials.com/tutorials/mysql/implementing-high-availability-in-mysql-8-01-14/page1.html

Just cut out the stuff with drbd as it is not needed!

Let me know if you need anything else.

In the passage "Staging a slave - no traffic on Cluster."

Can there also mysqldump --master-data be used? So the cluster dont need to have no traffic on the cluster then?

Thx for this great post!

Yes, sure you can you mysqldump --master-data if you don't have any traffic. Forgot to mention that so thank you very much! But don't forget to have the ndb_apply_status (engine=innodb/myisam) on the slave(s).

johan

Hi Johann,

I am trying to do cluster replication as suggested by you. However, when i restart mysqld nodes, it fails. The error log says

[Note] Starting Cluster Binlog Thread

090802 18:54:56 [ERROR] /usr/local/mysql/bin/mysqld: unknown variable 'ndb-log-updates-as-writes=0'

090802 18:54:56 [ERROR] Aborting

090802 18:54:56 [Note] Stopping Cluster Utility thread

090802 18:54:57 [Note] Stopping Cluster Binlog

090802 18:54:57 InnoDB: Starting shutdown...

090802 18:54:58 InnoDB: Shutdown completed; log sequence number 0 46409

090802 18:54:58 [Warning] Forcing shutdown of 1 plugins

090802 18:54:58 [Note] /usr/local/mysql/bin/mysqld: Shutdown complete

mysql --version shows this

mysql Ver 14.14 Distrib 5.1.34-ndb-6.3.25,

Hi Johan

It seems the correct variable name is ndb-log-update-as-write instead of ndb-log-updates-as-writes. Because

mysqld failed to start with the ndb-log-updates-as-writes variable saying unknown variable ndb-log-updates-as-writes.

Thanks,

Manish

Manish,

thanks for spotting this typo. I have fixed it in the blog now.

br

johan

Re LOST_EVENTS: Perhaps you mean GAP events (INCIDENT_LOST_EVENTS)? See the section covering the Binary Log in the MySQL Internals Manual at http://forge.mysql.com/wiki/MySQL_Internals_Binary_Log ("Event Classes and Types").

Great article...New favorite website for Mysql

Johan,

But this doesn't scale writes at all. All of the writes are still forced to be done on each slave, so the write capacity of the entire system is limited to the write capacity of a single thread on a single slave, which as we know is not very much. Of course you can slam an incredible amount of writes into the cluster, but the slaves will never have a chance to keep up.

Or maybe you are talking about something else -- am I missing your point entirely?

Hi Johan,

Thanks for the nice post.

In the first comment reply to Keith you said

The point in this setup is that you write to the Cluster, and read from the Slaves.

How can i split the writes and reads from application point of view, is there anything in the cluster setup which takes care of serving the reads from non-clustered but replicated slaves and routes the DB writes (insert/updates) to masters under cluster, without the need of modification in application logic?

our application is done using MyISAM, do i need to copy the database converting it to NDBEngine? Sorry, but i'm confused on the MyISAM vs. NDBEngine, mysql has the data stored in MyISAM so how does it go to Cluster? will there be any conflicts between two storage engines? if i need to restore from cluster will i get data in NDBEngine format?

sorry for so many question but I've a big SAAS based product to go live in a week or two and i've lot to accomplish. you guidance is highly appreciated.

can you please share your contact dteails.

Hi Johan,

I have been following this post and being inspired from your work and knowledge on mysql cluster. I have implemented one on mission critical project. But i do have to write joins which makes cluster a slow entity. any suggestion for the same ? setting up a innodb setup for reads will speed up things but it raises many problems in managing connections from application level.

Pawan: You could read this 3 part blog post for ideas how to work around joins. It is my preferred method anyway. Mixing NDB and InnoDB tables feels akward to me.

http://www.bigdbahead.com/?p=181

Pawan,

If you take this approach you need to split reads and writes in the application. This can be problematic but is quite a standard procedure also in master->slave replication with vanilla mysql.

Reporting type of queries should preferably not be done on the Cluster itself. Then you have to make your joins "small" so they touch as few records as possible, e.g, by denormalization or FORCE INDEX or rewrite the query in multiple parts.. At this moment, there is no silver bullit to get better join perf .. unfortunately, it requires some work.

Thanks guys. But i dont need to split read and writes. as my application is highly optimized for cluster use with findByPk kind of queries. But while taking reports it hurts the performance. and I dont want to keep all the old data onto the cluster as it takes lots of memory. I will use this replication to innodb for collecting all the data and Reports will be fetched from innodb. Switching off bin log entires while doing deletes dont replicate to the replication server as that server remains unaware about the delete. So thats solve my problem of using this replication as my database which can handle TB's where as i am working on Cluster to gain performance in 20 - 40 Gb of datasize. Any suggestions on the idea given above will be a bonus to me. Thanks once again for all your support. whats the update on SPJ ? how long it going to take ? that will be a bonus to cluster.

Hi Johan, Your post has been very helpful. Thanks! There is however a problem I encounter with the method. My replication halts with:

" Could not execute Write_rows event on table mysql.ndb_apply_status; Duplicate entry '' for key 'PRIMARY'"

It thus looks as if the replication of the ndb_apply_status is done as a write and not an update. On my master:

mysql> show variables like 'ndb_log_update_a%';

+-------------------------+-------+

| Variable_name | Value |

+-------------------------+-------+

| ndb_log_update_as_write | OFF |

+-------------------------+-------+

ndb_apply_status on the slave is on an Innodb engine.

The master is cluster 6.3.27a on two nodes.

The slave is 5.1.49

Bes regards,

Frans

Hi Johan,

Some feedback on my post yesterday. The set-up is changed from "ndb_log_updates_as_write=0 to the IDEMPOTENT alternative. This does solve the ndb_apply_status duplicate entries problem, so that is good.

Hi Johan

My setup is a two node cluster to a single host mysql. Cluster is master and SQL is slave, for replication.

I did not want to use the IDEMPOTENT since it could potentially bring in problem with other tables.

As you mentioed if I didnt use it or set the ndb-log-updates-as-write parameter the replication was failing.

I was getting the error.

Last_Errno: 1062

Last_Error: Could not execute Write_rows event on table mysql.ndb_apply_status; Duplicate entry '8' for key 'PRIMARY

So here is what I did. I altered the ndb_apply_status on the slave to drop the primary key (server id). As a result the replication started working, but the downside is the i have multiple entries in that tables.

I was even failover to the second master when the first master crashed. To do this I used the max(epoch) from the ndb_apply_status table.

I did not come across any other problem. Do you see any other issue here. Could you please let me know your views.

Hi Johann,

You wrote :

Master crashes

When the master restarts after a crash, it will write a LOST_EVENTS event into its binary log.

If a LOST_EVENTS event is received on a slave, the slave will stop, and you have to do a Master Failover.

How we can automaticaly switch a slave if the master 1 crash ?

The is a way using a solution such as mysql-proxy to do it ?

Hi Johan,

Im currently setting up a MySQL Cluster on HP Blade Servers. Spec is 2xXeon Procs & 24GB RAM, 600GB HD.

What would be the optimal number of data nodes, mysql nodes within the cluster? I noticed that some people also have multiple data node groups on the same servers. The installation is for a social media messaging site with a high read/write ratio.

Thanks in advance.

Hi Vin,

Usually you need 2x mysql servers to drive x data nodes, but it is very load dependent. I don't know the size of your data set, but start with two data nodes and 2-4 mysql servers (mysql servers are trivial to add). If you do things "right" you can scale linearly by adding more data nodes (online if you wish), Usually you dont have to put >1 data node on one computer, unless you have a >8 core machine (not counting HT).

It would be great if you can open up this as a Question at:

http://support.severalnines.com/forums/20303393-community-help/entries/new

It is easier for us to interact there and we can have a more detailed discussion.

Thank you,

Johan

Hello Johan,

I have some questions because some points are not clearly described in your guide.

In my example i try to create one MyISAM replication while the cluster is in productive mode.

In this case i have to Backup the cluster and create the txt files with ndb_restore right?

Then load the output txt file in MyISAM slave MySQL.

Question 1:

This is one file or one file per table in the cluster?

If this is one file, how can i use this command?

LOAD DATA INFILE 'tablename.TXT'

INTO table

FIELDS TERMINATED BY ','

ENCLOSED BY '\''

LINES TERMINATED BY '\n';

Question 2:

Then from the show master status i use the file and position to this Query:

SELECT @file:=SUBSTRING_INDEX(File, '/',-1),

@pos:=Position

FROM mysql.ndb_binlog_index

WHERE gci>@stopGCP ORDER BY gci ASC LIMIT 1;

right?

If the Query shows empty set then i will go to next step "change Master"

If the Query can't return empty set (this is the case that the cluster writes on db?), what i have to do, the output returns @file and @position?

This @file and @position i have to use then in the

Change master command?

Thanks.

Post a Comment